HA Load Balancing

Last Modified: 12/11/2013 at 13:56

Overview

HA Load Balancing, introduced in R3.1, lets you distribute VMs across both PMs to improve performance and availability. It is a preference that you enable on the Preferences > System Resources page of the Avance Management Console. Load balancing is configured per VM, either automatically or by user assignment. If a PM is out of service, all VMs run on the surviving PM. VMs automatically migrate back as soon as the PM they are targeted to run on is returned to service and fully synchronized.

Enabling/Disabling

- Go to the Preferences > System Resources page.

- Click Enable or Disable under HA Load Balancing.

- Save the settings.

When HA Load Balancing is enabled from the Preferences page, Avance detects whether the VMs can be automatically balanced and prompts you before proceeding. If you click “Yes”, VM migrations start immediately to balance the system. If you prefer to assign VMs manually or defer the migrations until later, click “No” at the prompt.

Note: If you are upgrading to release R3.1, you must re-activate your license to enable this feature. Re-activation occurs automatically within the first 24 hours of operation if your Avance system is connected to the Internet, or it can be done manually as explained on the license page.

Modes of Operation

VM Load balancing is set for a VM on its HA Load Balance tab on the Virtual Machines page. The following mechanisms are supported:

- Automatic Load Balancing of VMs. VMs are distributed evenly across both PMs. When enabled, this feature generates an alert on the dashboard and a Load Balancing notification on the masthead. Click Load Balance to initiate automatic load balancing of VMs. The balance icon in the Current PM column of the Virtual Machines page indicates VMs that will migrate imminently.

- Manual Load Balancing of VMs. Users with better knowledge of how their VMs are being used can manually assign a preferred PM for each individual VM, rather than relying on the Automatic policy.

- Automatic/Manual Load Balancing of VMs. The user can manually place one or more VMs on a specific PM and use the Automatic policy for the remaining VMs.
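The combined Automatic/Manual mode can be pictured with a short sketch. This is only an illustration of the idea of "manual assignments first, then an even fill"; it is not Avance's actual placement algorithm, and the PM names (`node0`, `node1`), the function name, and the tie-breaking rule are assumptions made for the example.

```python
from typing import Dict, List

# Hypothetical PM names used only for this illustration.
PMS = ("node0", "node1")


def assign_preferred_pms(vms: List[str], manual: Dict[str, str]) -> Dict[str, str]:
    """Return a preferred PM for every VM.

    VMs listed in `manual` keep the PM the user chose; the remaining
    VMs are spread so that the two PMs end up as even as possible.
    """
    for vm, pm in manual.items():
        if pm not in PMS:
            raise ValueError(f"unknown PM {pm!r} for VM {vm!r}")

    # Start from the user's manual assignments.
    assignment = {vm: manual[vm] for vm in vms if vm in manual}
    counts = {pm: 0 for pm in PMS}
    for pm in assignment.values():
        counts[pm] += 1

    # Place each remaining VM on the PM that currently holds fewer VMs
    # (ties go to the first PM in PMS; this rule is an assumption).
    for vm in vms:
        if vm in assignment:
            continue
        target = min(PMS, key=lambda pm: counts[pm])
        assignment[vm] = target
        counts[target] += 1
    return assignment
```

For example, with four VMs of which two are manually assigned to `node0`, the sketch places the other two on `node1`, so each PM is preferred by two VMs.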

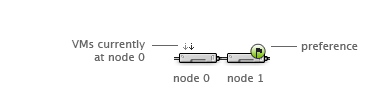

A visual aid has been added to the Virtual Machines page for each VM to illustrate the current load-balancing state of that VM. It provides a quick reference for where the VM is currently running as well as its preferred PM.

Avance policy ensures that a VM is always running. If one PM is predicted to fail, is under maintenance, or is taken out of service, the VM runs on the healthy PM. When both nodes are healthy, a VM always migrates to its preferred PM.
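The policy described above can be summarized as a small decision function. The sketch below is a simplified model, not Avance code: the `PMState` fields, the PM names, and the function name are hypothetical, and "predicted to fail" or "under maintenance" are folded into a single `in_service` flag for brevity.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class PMState:
    """Simplified, hypothetical view of one PM."""
    in_service: bool    # False if failed, predicted to fail, or in maintenance
    synchronized: bool  # True once the PM is fully synchronized


def choose_running_pm(preferred: str, pms: Dict[str, PMState]) -> str:
    """Pick the PM a VM should run on under the policy described above."""
    target = pms[preferred]
    # The VM runs on (or migrates back to) its preferred PM only when
    # that PM is in service and fully synchronized.
    if target.in_service and target.synchronized:
        return preferred
    # Otherwise the VM runs on the other PM, if it is in service.
    for name, state in pms.items():
        if name != preferred and state.in_service:
            return name
    raise RuntimeError("no PM is in service")
```

With both PMs in service and synchronized, a VM that prefers `node1` runs on `node1`. If `node1` is taken out of service, or has returned but is still synchronizing, the same call returns `node0`.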

Performance/Availability Advantages

Enabling HA Load Balancing can improve the overall system performance of your Avance deployment:

- During normal operations, the computing resources of both servers can be used.

- Load balancing shortens VM migration times.

- Disk reads are distributed across both nodes for improved read performance, network bandwidth for writing to disk is doubled, and synchronous write replication is accelerated.

An additional benefit of HA Load Balancing is improved availability in the event of a private link failure. The system rides through these faults by communicating over your 10Gb synchronization links or the management link (network0). Note that this feature should not be enabled unless you have a 10Gb synchronization link or can ensure adequate bandwidth on network0.

Special Considerations

HA Load Balancing relies on having alternate paths for both data synchronization and VM migration traffic. Avance always chooses a 10Gb synchronization link if one is available, followed by the private link (priv0) and finally the management link (ibiz0). If your system is not configured with 10Gb synchronization links, it is strongly recommended that you do not allocate ibiz0 for VM usage. Because ibiz0 carries synchronization traffic after a failure of the private link, sharing it with VMs can over-subscribe the network under fault conditions.
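The link preference order above amounts to a first-match search over a fixed priority list. The following sketch illustrates that ordering only; it is not how Avance is implemented, and the `sync_10gb` label and function name are hypothetical (only `priv0` and `ibiz0` are names taken from this page).

```python
from typing import Optional, Set

# Preference order described above: 10Gb synchronization link first,
# then the private link, then the management link.
# "sync_10gb" is a placeholder label, not a real interface name.
LINK_PRIORITY = ("sync_10gb", "priv0", "ibiz0")


def select_sync_link(available: Set[str]) -> Optional[str]:
    """Return the highest-priority link that is currently available."""
    for link in LINK_PRIORITY:
        if link in available:
            return link
    return None  # no path left between the two PMs
```

On a system without 10Gb synchronization links, `select_sync_link({"priv0", "ibiz0"})` returns `"priv0"`, and after a private link failure `select_sync_link({"ibiz0"})` returns `"ibiz0"`, which is why VM traffic on ibiz0 would then compete with synchronization traffic.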